From Sparse Landmarks to Dense Pseudo-Correspondence

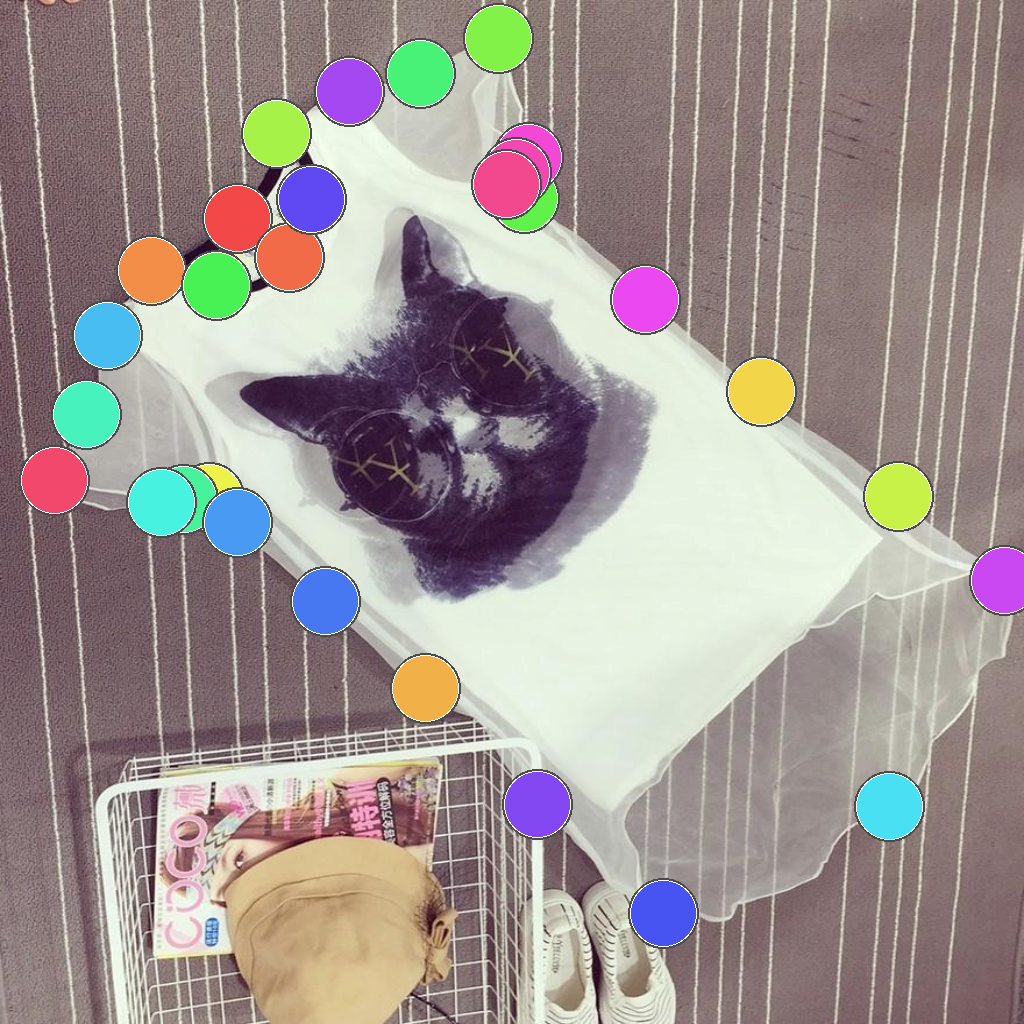

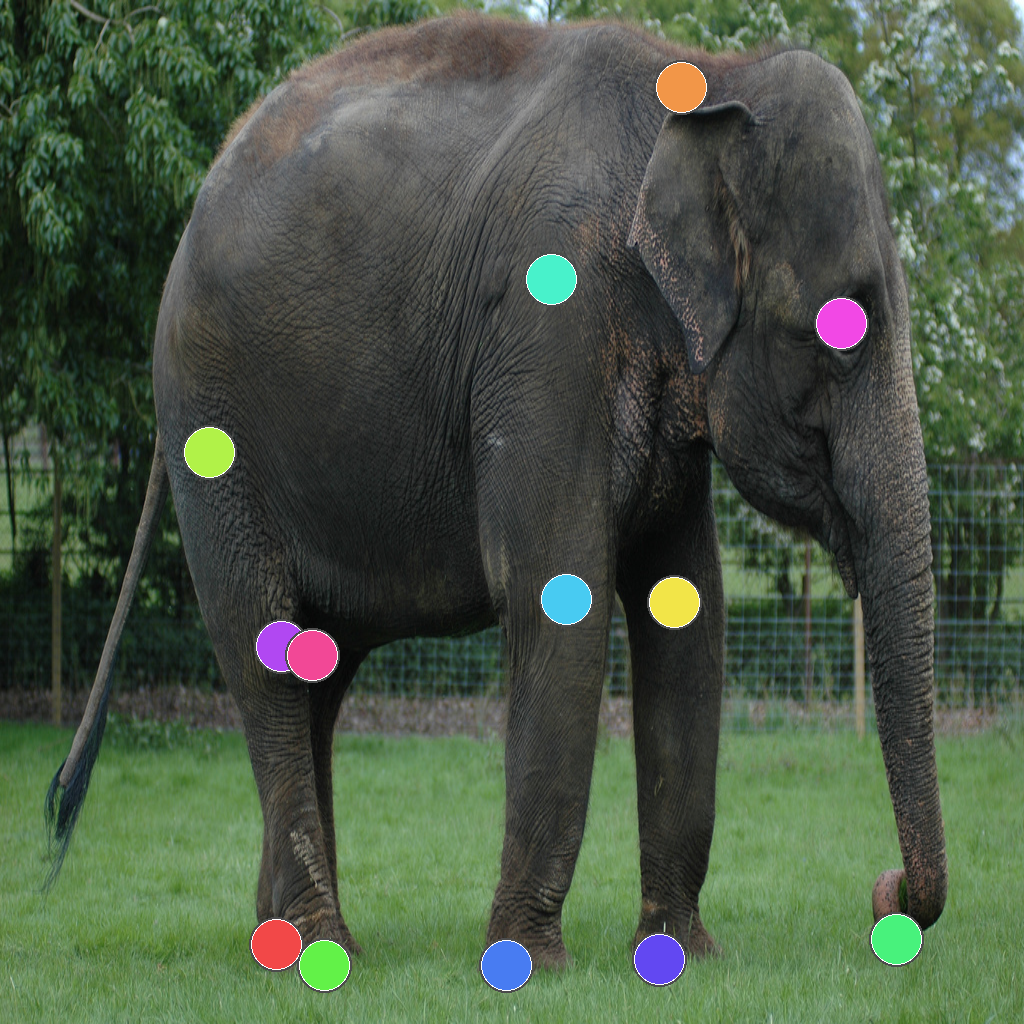

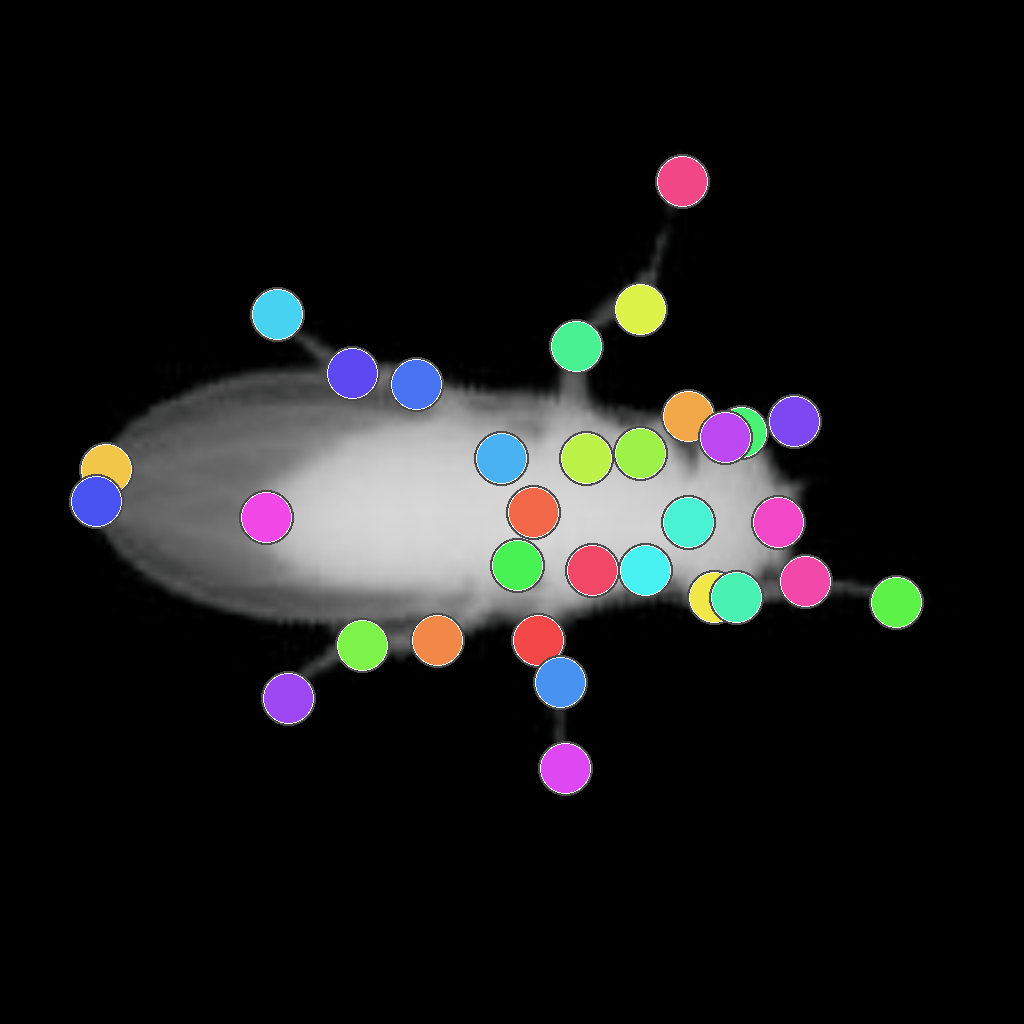

Raw DINOv2 features already carry meaningful correspondence cues across the whole object. Standard fine-tuning on sparse landmarks sharpens semantics around the annotated keypoints but causes the correspondence field to collapse around them. MARCO instead propagates supervision across the object surface, producing a smoother and more geometrically consistent flow.

For each source/target image pair, we visualize the source-to-target correspondence flow in HSV color space, where the color at each source location encodes its displacement to the matched target location.
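To make the visualization concrete, here is a minimal sketch of how such a flow image can be produced. It assumes the source and target images have already been encoded into patch-feature grids (for example by a DINOv2 backbone, which is not called here), matches every source patch to its most cosine-similar target patch, and colors the resulting displacement with the common optical-flow convention of hue = direction and value = normalized magnitude. The function names `nn_flow`, `hsv_to_rgb` and `flow_to_rgb` are hypothetical, and the exact color mapping used in the figures above is an assumption.

```python
import numpy as np


def nn_flow(feat_src, feat_tgt):
    """Dense source-to-target flow from nearest-neighbour feature matching.

    feat_src: (Hs, Ws, C) patch features of the source image.
    feat_tgt: (Ht, Wt, C) patch features of the target image.
    Returns an (Hs, Ws, 2) array of (dx, dy) displacements in patch units.
    """
    hs, ws, c = feat_src.shape
    wt = feat_tgt.shape[1]
    src = feat_src.reshape(-1, c)
    tgt = feat_tgt.reshape(-1, c)
    # Cosine similarity between every source patch and every target patch.
    src = src / (np.linalg.norm(src, axis=1, keepdims=True) + 1e-8)
    tgt = tgt / (np.linalg.norm(tgt, axis=1, keepdims=True) + 1e-8)
    best = (src @ tgt.T).argmax(axis=1)  # best target patch per source patch
    ty, tx = np.divmod(best, wt)  # flat index -> (row, col) on the target grid
    ys, xs = np.mgrid[0:hs, 0:ws]
    dx = tx.reshape(hs, ws) - xs
    dy = ty.reshape(hs, ws) - ys
    return np.stack([dx, dy], axis=-1).astype(float)


def hsv_to_rgb(h, s, v):
    """Vectorised HSV -> RGB conversion; all channels in [0, 1]."""
    i = np.floor(h * 6).astype(int) % 6
    f = h * 6 - np.floor(h * 6)
    p = v * (1 - s)
    q = v * (1 - f * s)
    t = v * (1 - (1 - f) * s)
    r = np.choose(i, [v, q, p, p, t, v])
    g = np.choose(i, [t, v, v, q, p, p])
    b = np.choose(i, [p, p, t, v, v, q])
    return np.stack([r, g, b], axis=-1)


def flow_to_rgb(flow):
    """Hue = displacement direction, value = normalised displacement magnitude."""
    dx, dy = flow[..., 0], flow[..., 1]
    hue = (np.arctan2(dy, dx) + np.pi) / (2 * np.pi)  # angle mapped to [0, 1]
    mag = np.hypot(dx, dy)
    value = mag / (mag.max() + 1e-8)  # brightest = largest displacement
    return hsv_to_rgb(hue, np.ones_like(hue), value)
```

With this convention a coherent field shows up as large, smoothly varying color regions, while a collapsed or noisy field appears as scattered, abrupt color changes. Matching identical feature grids yields zero flow everywhere (a black image), which is a quick sanity check.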

Raw DINOv2 already produces a partially coherent correspondence field

This is the key observation behind MARCO: instead of discarding that structure, we use it both as a source of self-supervision and as a way to progressively densify correspondences during training.
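This section does not spell out how MARCO extracts supervision from the raw field, so the following is only a generic illustration of the idea, not MARCO's procedure. A common way to turn a partially coherent feature field into pseudo-correspondences is a mutual nearest-neighbour check: keep a source/target patch pair only if each is the other's best match and their cosine similarity clears a threshold. The function `mutual_nn_pseudo_labels` and its `min_sim` parameter are hypothetical.

```python
import numpy as np


def mutual_nn_pseudo_labels(feat_src, feat_tgt, min_sim=0.5):
    """Hypothetical pseudo-label extraction via mutual nearest neighbours.

    feat_src: (Hs, Ws, C) and feat_tgt: (Ht, Wt, C) patch features.
    Returns flat source indices, flat target indices and cosine scores
    for pairs that are mutual nearest neighbours with score >= min_sim.
    """
    c = feat_src.shape[-1]
    src = feat_src.reshape(-1, c)
    tgt = feat_tgt.reshape(-1, c)
    src = src / (np.linalg.norm(src, axis=1, keepdims=True) + 1e-8)
    tgt = tgt / (np.linalg.norm(tgt, axis=1, keepdims=True) + 1e-8)
    sim = src @ tgt.T
    fwd = sim.argmax(axis=1)  # best target patch for each source patch
    bwd = sim.argmax(axis=0)  # best source patch for each target patch
    src_idx = np.arange(src.shape[0])
    mutual = bwd[fwd] == src_idx  # the match is consistent in both directions
    scores = sim[src_idx, fwd]
    keep = mutual & (scores >= min_sim)
    return src_idx[keep], fwd[keep], scores[keep]
```

A threshold such as the hypothetical `min_sim` is one knob through which a pseudo-label set could be grown over training; whether and how MARCO schedules its densification is not stated in this section.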